The previous chapter, entitled An iOS 17 Speech Recognition Tutorial, introduced the Speech framework and the speech recognition capabilities available to app developers since the introduction of the iOS 10 SDK. The chapter also provided a tutorial demonstrating how to use the Speech framework to transcribe a pre-recorded audio file into text.

This chapter builds on that knowledge to create an example project that uses the Speech framework to transcribe speech in near real-time.

Creating the Project

Begin by launching Xcode and creating a new single view-based app named LiveSpeech using the Swift programming language.

Designing the User Interface

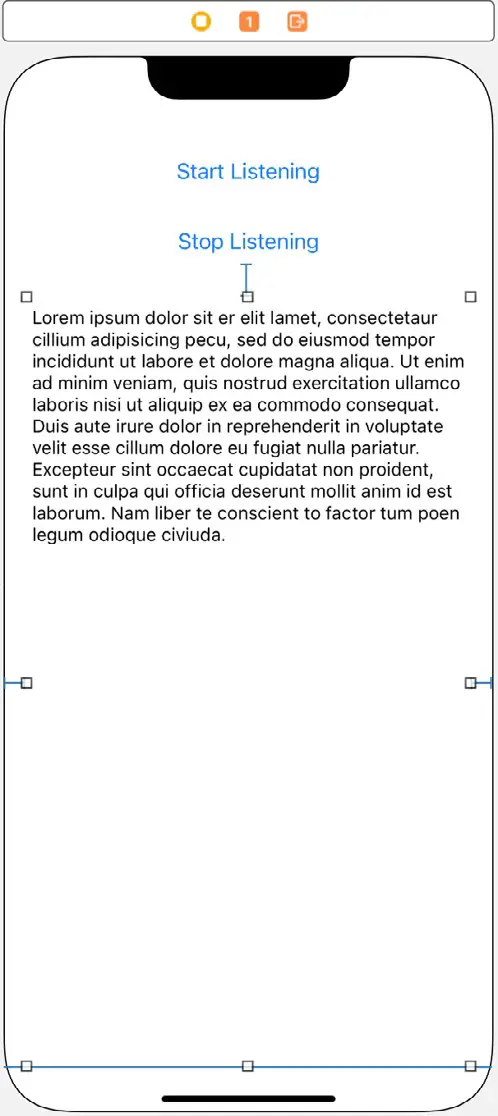

Select the Main.storyboard file, add two Buttons and a Text View component to the scene, and configure and position these views so that the layout appears as illustrated in Figure 91-1 below:

Display the Resolve Auto Layout Issues menu and select the Reset to Suggested Constraints option listed under All Views in View Controller. Then select the Text View object, display the Attributes Inspector panel, and remove the sample Latin text.

Display the Assistant Editor panel and establish outlet connections for the Buttons named transcribeButton and stopButton, respectively. Next, repeat this process to connect an outlet for the Text View named myTextView. Then, with the Assistant Editor panel still visible, establish action connections from the Buttons to methods named startTranscribing and stopTranscribing.

Adding the Speech Recognition Permission

Select the LiveSpeech entry at the top of the Project navigator panel and select the Info tab in the main panel. Next, click on the + button contained within the last line of properties in the Custom iOS Target Properties section. Then, select the Privacy – Speech Recognition Usage Description item from the resulting menu. Once the key has been added, double-click in the corresponding value column and enter the following text:

Speech recognition services are used by this app to convert speech to text.

Repeat this step to add a Privacy – Microphone Usage Description entry.
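The two entries correspond to the raw Info.plist keys NSSpeechRecognitionUsageDescription and NSMicrophoneUsageDescription. As a hedged sketch, the following helper checks a dictionary of Info.plist values for both keys, which may help catch a missing or empty entry during development. The function name missingUsageKeys and the sampleInfo dictionary are hypothetical and not part of the tutorial project:

```swift
import Foundation

// Raw Info.plist key names behind the two "Privacy – ..." entries.
let requiredUsageKeys = [
    "NSSpeechRecognitionUsageDescription",
    "NSMicrophoneUsageDescription"
]

// Hypothetical helper: returns the required keys that are absent or empty.
func missingUsageKeys(in info: [String: Any]) -> [String] {
    requiredUsageKeys.filter { key in
        guard let text = info[key] as? String else { return true }
        return text.trimmingCharacters(in: .whitespaces).isEmpty
    }
}

// Hand-built example dictionary (an assumption for illustration); in an app,
// Bundle.main.infoDictionary would supply the real values.
let sampleInfo: [String: Any] = [
    "NSSpeechRecognitionUsageDescription":
        "Speech recognition services are used by this app to convert speech to text."
]
print(missingUsageKeys(in: sampleInfo))
```

With the sample dictionary above, only the microphone key is reported as missing, since the speech recognition description is present.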

Requesting Speech Recognition Authorization

The code to request speech recognition authorization is the same as that used in the previous chapter. For this example, the code to perform this task will, once again, be added as a method named authorizeSR within the ViewController.swift file as follows, remembering to import the Speech framework:

.
.
import Speech
.
.
func authorizeSR() {
    SFSpeechRecognizer.requestAuthorization { authStatus in
        OperationQueue.main.addOperation {
            switch authStatus {
            case .authorized:
                self.transcribeButton.isEnabled = true
            case .denied:
                self.transcribeButton.isEnabled = false
                self.transcribeButton.setTitle(
                    "Speech recognition access denied by user", for: .disabled)
            case .restricted:
                self.transcribeButton.isEnabled = false
                self.transcribeButton.setTitle(
                    "Speech recognition restricted on device", for: .disabled)
            case .notDetermined:
                self.transcribeButton.isEnabled = false
                self.transcribeButton.setTitle(
                    "Speech recognition not authorized", for: .disabled)
            @unknown default:
                print("Unknown state")
            }
        }
    }
}

Remaining in the ViewController.swift file, locate and modify the viewDidLoad method to call the authorizeSR method:

override func viewDidLoad() {
    super.viewDidLoad()
    authorizeSR()
}

Declaring and Initializing the Speech and Audio Objects

To transcribe speech in real-time, the app will require instances of the SFSpeechRecognizer, SFSpeechAudioBufferRecognitionRequest, and SFSpeechRecognitionTask classes. In addition to these speech recognition objects, the code will also need an AVAudioEngine instance to stream the audio into an audio buffer for transcription. Edit the ViewController.swift file and declare constants and variables to store these instances as follows:

import UIKit
import Speech

class ViewController: UIViewController {

    @IBOutlet weak var transcribeButton: UIButton!
    @IBOutlet weak var stopButton: UIButton!
    @IBOutlet weak var myTextView: UITextView!

    private let speechRecognizer = SFSpeechRecognizer(locale:
        Locale(identifier: "en-US"))!

    private var speechRecognitionRequest:
        SFSpeechAudioBufferRecognitionRequest?
    private var speechRecognitionTask: SFSpeechRecognitionTask?
    private let audioEngine = AVAudioEngine()
.
.

Starting the Transcription

The first task in initiating speech recognition is to add some code to the startTranscribing action method. Since several of the method calls needed to perform speech recognition can throw errors, a second method declared with the throws keyword will be called by the action method to perform the actual work (adding the throws keyword to the startTranscribing method itself will cause a crash at runtime because action method signatures are not recognized as throwing). Therefore, within the ViewController.swift file, modify the startTranscribing action method and add a new method named startSession:

.
.
.
@IBAction func startTranscribing(_ sender: Any) {
    transcribeButton.isEnabled = false
    stopButton.isEnabled = true

    do {
        try startSession()
    } catch {
        // Handle Error
    }
}

func startSession() throws {

    if let recognitionTask = speechRecognitionTask {
        recognitionTask.cancel()
        self.speechRecognitionTask = nil
    }

    let audioSession = AVAudioSession.sharedInstance()
    try audioSession.setCategory(AVAudioSession.Category.record,
                                 mode: .default)

    speechRecognitionRequest = SFSpeechAudioBufferRecognitionRequest()

    guard let recognitionRequest = speechRecognitionRequest else {
        fatalError(
            "SFSpeechAudioBufferRecognitionRequest object creation failed")
    }

    let inputNode = audioEngine.inputNode

    recognitionRequest.shouldReportPartialResults = true

    speechRecognitionTask = speechRecognizer.recognitionTask(
        with: recognitionRequest) { result, error in

        var finished = false

        if let result = result {
            self.myTextView.text =
                result.bestTranscription.formattedString
            finished = result.isFinal
        }

        if error != nil || finished {
            self.audioEngine.stop()
            inputNode.removeTap(onBus: 0)

            self.speechRecognitionRequest = nil
            self.speechRecognitionTask = nil

            self.transcribeButton.isEnabled = true
        }
    }

    let recordingFormat = inputNode.outputFormat(forBus: 0)
    inputNode.installTap(onBus: 0, bufferSize: 1024, format: recordingFormat) {
        (buffer: AVAudioPCMBuffer, when: AVAudioTime) in
        self.speechRecognitionRequest?.append(buffer)
    }

    audioEngine.prepare()
    try audioEngine.start()
}
.
.
.

The startSession method performs several tasks, each of which is broken down and explained in the remainder of this section.
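Before working through startSession, note that the catch block in startTranscribing is left as a placeholder. One possible way to fill it in, sketched here under stated assumptions, is to restore the button states when the session fails to start so that the user can try again. The ButtonState and SessionError types and the fail parameter are hypothetical stand-ins that allow the pattern to run outside a view controller; they are not part of the tutorial project:

```swift
import Foundation

// Hypothetical stand-in for the enabled states of the two buttons.
struct ButtonState {
    var transcribeEnabled = true
    var stopEnabled = false
}

// Hypothetical error type used only to simulate a failure.
enum SessionError: Error { case engineFailedToStart }

// Stand-in for startSession(); the `fail` flag simulates a thrown error.
func startSession(fail: Bool) throws {
    if fail { throw SessionError.engineFailedToStart }
}

// Mirrors startTranscribing: flip the buttons, call the throwing helper,
// and roll the buttons back if the helper throws.
func startTranscribing(_ buttons: inout ButtonState, fail: Bool) {
    buttons.transcribeEnabled = false
    buttons.stopEnabled = true
    do {
        try startSession(fail: fail)
    } catch {
        print("Unable to start session: \(error)")
        buttons.transcribeEnabled = true
        buttons.stopEnabled = false
    }
}

var buttons = ButtonState()
startTranscribing(&buttons, fail: true)
print(buttons.transcribeEnabled, buttons.stopEnabled)
```

In the real view controller, the same rollback would amount to setting transcribeButton.isEnabled and stopButton.isEnabled inside the catch block.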

The first tasks performed within the startSession method are to check whether a previous recognition task is running and, if so, to cancel it. The method then configures an audio recording session and assigns an SFSpeechAudioBufferRecognitionRequest object to the speechRecognitionRequest variable declared previously. A test is then performed to ensure that the SFSpeechAudioBufferRecognitionRequest object was successfully created. If the creation fails, the app is terminated with a fatal error:

if let recognitionTask = speechRecognitionTask {
    recognitionTask.cancel()
    self.speechRecognitionTask = nil
}

let audioSession = AVAudioSession.sharedInstance()
try audioSession.setCategory(AVAudioSession.Category.record, mode: .default)

speechRecognitionRequest = SFSpeechAudioBufferRecognitionRequest()

guard let recognitionRequest = speechRecognitionRequest else {
    fatalError("SFSpeechAudioBufferRecognitionRequest object creation failed")
}

Next, the code obtains a reference to the inputNode of the audio engine and assigns it to a constant. The recognitionRequest instance is then configured to return partial results, enabling transcription to occur continuously as speech audio arrives in the buffer. If this property is set to false, the recognizer will wait until the audio has ended before reporting the transcription.

let inputNode = audioEngine.inputNode

recognitionRequest.shouldReportPartialResults = true

Next, the recognition task is initialized:

speechRecognitionTask = speechRecognizer.recognitionTask(
    with: recognitionRequest) { result, error in

    var finished = false

    if let result = result {
        self.myTextView.text = result.bestTranscription.formattedString
        finished = result.isFinal
    }

    if error != nil || finished {
        self.audioEngine.stop()
        inputNode.removeTap(onBus: 0)

        self.speechRecognitionRequest = nil
        self.speechRecognitionTask = nil

        self.transcribeButton.isEnabled = true
    }
}

The above code creates the recognition task, initialized with the recognition request object. A closure is specified as the completion handler, which will be called repeatedly as each block of transcribed text is completed. Each time the handler is called, it is passed a result object containing the latest version of the transcribed text and an error object. Whenever a result is present, its text is displayed on the Text View. As long as the isFinal property of the result object is false (indicating that live audio is still streaming into the buffer) and no error has occurred, the session continues. Otherwise, the audio engine is stopped, the tap is removed from the audio node, and the recognition request and recognition task objects are set to nil. The transcribe button is also enabled in preparation for the next session.
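The decision logic inside the completion handler can be sketched in isolation as a small pure function. This is a simplified model rather than the Speech framework API: the RecognitionUpdate type and the process function are hypothetical, with a Boolean failed flag standing in for a non-nil error object:

```swift
import Foundation

// Hypothetical model of one callback from the recognition task.
struct RecognitionUpdate {
    var text: String?      // nil when no result was delivered
    var isFinal = false    // mirrors result.isFinal
    var failed = false     // stands in for a non-nil error
}

// Returns the text to display (if any) and whether the session
// should be torn down, following the same rules as the handler.
func process(_ update: RecognitionUpdate) -> (display: String?, tearDown: Bool) {
    var finished = false
    if update.text != nil {
        finished = update.isFinal
    }
    return (update.text, update.failed || finished)
}

// Two partial results followed by a final one.
let updates = [
    RecognitionUpdate(text: "Hello"),
    RecognitionUpdate(text: "Hello world"),
    RecognitionUpdate(text: "Hello world", isFinal: true)
]
for update in updates {
    let outcome = process(update)
    print(outcome.display ?? "<no result>", outcome.tearDown)
}
```

Only the last update in the sequence requests a teardown. An update with failed set to true would do the same, even when no text accompanies it.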

Having configured the recognition task, all that remains in this phase of the process is to install a tap on the input node of the audio engine, then start the engine running:

let recordingFormat = inputNode.outputFormat(forBus: 0)

inputNode.installTap(onBus: 0, bufferSize: 1024, format: recordingFormat) {
    (buffer: AVAudioPCMBuffer, when: AVAudioTime) in
    self.speechRecognitionRequest?.append(buffer)
}

audioEngine.prepare()
try audioEngine.start()

Note that the installTap method of the inputNode object also takes a closure. Each time this closure is called, it appends the latest audio buffer to the speechRecognitionRequest object, where the audio is transcribed and passed to the completion handler for the speech recognition task, which displays the text on the Text View.
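To give a sense of how often the tap closure may run, the duration of one buffer is its frame count divided by the sample rate. The sketch below assumes sample rates of 44,100 Hz and 48,000 Hz, which are common but hardware-dependent values not stated in this chapter. The actual rate can be read from recordingFormat.sampleRate, and the bufferSize argument is a request that the system may not honor exactly. The bufferDuration function is a hypothetical helper:

```swift
import Foundation

// Duration in seconds of a buffer holding `frames` frames at `sampleRate` Hz.
func bufferDuration(frames: Int, sampleRate: Double) -> Double {
    Double(frames) / sampleRate
}

// Assumed sample rates for illustration only.
for rate in [44_100.0, 48_000.0] {
    let milliseconds = bufferDuration(frames: 1024, sampleRate: rate) * 1000
    print(String(format: "%.0f Hz: %.1f ms per buffer", rate, milliseconds))
}
```

Under these assumptions, a 1024-frame buffer covers a little over 20 milliseconds of audio, so the closure would be expected to run dozens of times per second.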

Implementing the stopTranscribing Method

With the exception of the stopTranscribing method, the app is now almost ready to be tested. Within the ViewController.swift file, locate and modify this method to stop the audio engine and configure the status of the buttons ready for the next session:

@IBAction func stopTranscribing(_ sender: Any) {
    if audioEngine.isRunning {
        audioEngine.stop()
        speechRecognitionRequest?.endAudio()
        transcribeButton.isEnabled = true
        stopButton.isEnabled = false
    }
}

Testing the App

Compile and run the app on a physical iOS device, grant access to the microphone and permission to use speech recognition, and tap the Start Transcribing button. Next, speak into the device and watch as the audio is transcribed into the Text View. Finally, tap the Stop Transcribing button to end the session.

Summary

Live speech recognition is provided by the iOS Speech framework and allows speech to be transcribed into text as it is being recorded. This process taps into an AVAudioEngine input node to stream the audio into a buffer and uses appropriately configured SFSpeechRecognizer, SFSpeechAudioBufferRecognitionRequest, and SFSpeechRecognitionTask objects to perform the recognition. This chapter worked through creating an example app designed to demonstrate how these various components work together to implement near-real-time speech recognition.